Artificial Intelligence

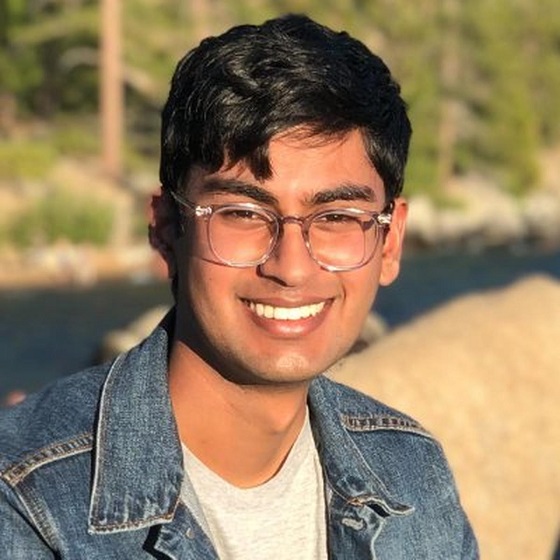

Death of an Open A.I. Whistleblower

By John Leake

Suchir Balaji was trying to warn the world of the dangers of Open A.I. when he was found dead in his apartment. His story suggests that San Francisco has become an open sewer of corruption.

According to Wikipedia:

Suchir Balaji (1998 – November 26, 2024) was an artificial intelligence researcher and former employee of OpenAI, where he worked from 2020 until 2024. He gained attention for his whistleblowing activities related to artificial intelligence ethics and the inner workings of OpenAI.

Balaji was found dead in his home on November 26, 2024. San Francisco authorities determined the death was a suicide, though Balaji’s parents have disputed the verdict.

Balaji’s mother just gave an extraordinary interview with Tucker Carlson that is well worth watching.

A quick Google search of David Serrano Sewell, the executive director of San Francisco’s medical examiner’s office, turned up a Feb. 8, 2024 report in the San Francisco Standard headlined “San Francisco official likely tossed out human skull, lawsuit says.” According to the report:

The disappearance of a human skull has spurred a lawsuit against the top administrator of San Francisco’s medical examiner’s office from an employee who alleges she faced retaliation for reporting the missing body part.

Sonia Kominek-Adachi alleges in a lawsuit filed Monday that she was terminated from her job as a death investigator after finding that the executive director of the office, David Serrano Sewell, may have “inexplicably” tossed the skull while rushing to clean up the office ahead of an inspection.

Kominek-Adachi made the discovery in January 2023 while doing an inventory of body parts held by the office, her lawsuit says. Her efforts to raise an alarm around the missing skull allegedly led up to her firing last October.

If the allegations of this lawsuit are true, they suggest that Mr. Serrano Sewell is an unscrupulous and vindictive man. According to the SF Gov website:

Serrano Sewell joined the OCME with over 16 years of experience developing management structures, building consensus, and achieving policy improvements in the public, nonprofit, and private sectors. He previously served as a Mayor’s aide, Deputy City Attorney, and a policy advocate for public and nonprofit hospitals.

In other words, he is an old denizen of the San Francisco city machine. If a mafia-like organization has penetrated the city administration, it would be well-served by having a key player run the medical examiner’s office.

According to Balaji’s mother, Poornima Ramarao, his death was an obvious murder that was crudely staged to look like a suicide. The responding police officers spent only forty minutes examining the scene and then left the body in the apartment to be retrieved by medical examiner field agents the next day. If true, this was an act of breathtaking negligence.

I have written a book about two murders that were staged to look like suicides, and to me, Mrs. Ramarao’s story sounds highly credible. Balaji kept a pistol in his apartment for self-defense because he felt that his life was possibly in danger. He was found shot in the head with this pistol, which was purportedly found in his hand. If his death was indeed a murder staged to look like a suicide, it raises the suspicion that the assailant knew that Balaji possessed this pistol and where he kept it in his apartment.

Balaji was found with a gunshot wound to his head, fired from above, with the bullet apparently traveling downward through his face and missing his brain. Based on his mother’s testimony, he had also sustained what sounds like a blunt force injury on the left side of the head, suggesting that a right-handed assailant first struck him with a blunt instrument that may have knocked him unconscious or stunned him. The gunshot was apparently inflicted after this blow.

A fragment of a bloodstained wig found in the apartment suggests the assailant wore a wig to disguise himself in case he was captured by the surveillance camera at the building’s main entrance. No surveillance camera was positioned over the entrance to Balaji’s apartment.

How did the assailant enter Balaji’s apartment? Did Balaji know the assailant and let him in? Alternatively, did the assailant somehow—perhaps through a contact in the building’s management—obtain a key to the apartment?

All of these questions could probably be easily answered with a proper investigation, but it sounds like the responding officers hastily concluded it was a suicide, and the medical examiner’s office hastily confirmed their initial perception. If good crime scene photographs could be obtained, a decent bloodstain pattern analyst could probably reconstruct what happened to Balaji.

Vernon J. Geberth, a retired Lieutenant Commander of the New York City Police Department, has written extensively about how responding officers often mistake homicides for suicides. The initial perception of suicide at a death scene often results in a lack of proper analysis. His essay “The Seven Major Mistakes in Suicide Investigation” should be required reading for every police officer whose job includes examining the scenes of unattended deaths.

However, judging by his mother’s testimony, Suchir Balaji’s death was obviously a murder staged to look like a suicide. Someone in a position of power decided it was best to perform only the most cursory investigation and to rule the manner of death suicide based on the mere fact that the pistol was purportedly found in the victim’s hand.

Readers who are interested in learning more about this kind of crime will find it interesting to watch my documentary film in which I examine two murders that were staged to look like suicides. Incidentally, the film is now showing in the Hollywood North International Film Festival. Please click on the image below to watch the film.

If you don’t have a full forty minutes to spare to watch the entire picture, please consider devoting just one second of your time to click on the vote button. Many thanks!

Subscribe to Courageous Discourse™ with Dr. Peter McCullough & John Leake.



Trump’s New AI-Focused ‘Manhattan Project’ Adds Pressure To Grid

From the Daily Caller News Foundation

Will America’s electricity grid make it through the impending winter of 2025-26 without suffering major blackouts? It’s a legitimate question to ask, given the dearth of adequate dispatchable baseload capacity that now exists on a majority of the major regional grids, according to a new report from the North American Electric Reliability Corporation (NERC).

In its report, NERC expresses particular concern about the Texas grid operated by the Electric Reliability Council of Texas (ERCOT), where a rapid buildout of new, energy-hogging AI datacenters and major industrial users is driving a steep increase in electricity demand. “Strong load growth from new data centers and other large industrial end users is driving higher winter electricity demand forecasts and contributing to continued risk of supply shortfalls,” NERC notes.

Texas, remember, lost 300 souls in February 2021 when Winter Storm Uri put the state in a deep freeze for a week. The freezing temperatures, combined with snowy and icy conditions, first caused the state’s wind and solar fleets to fail. When ERCOT implemented rolling blackouts, it cut power to some of the state’s natural gas transmission infrastructure, causing that infrastructure to freeze up, which in turn knocked a significant percentage of natural gas power plants offline. Because the state had already shut down so much of its once formidable fleet of coal-fired plants and hasn’t opened a new nuclear plant since the mid-1980s, the result was a disastrous blackout that lingered for days.


Either this country’s power generation sector gets serious about building out the needed new thermal capacity, or disaster will inevitably strike again, because demand isn’t going to stop rising anytime soon. In fact, the already rapid expansion of the AI datacenter industry is certain to accelerate in the wake of President Trump’s approval on Monday of the Genesis Mission, a plan to create another Manhattan Project-style partnership between the government and private industry focused on AI.

It’s an incredibly complex vision, but what the Genesis Mission boils down to is an effort to build an “integrated AI platform” that combines all federal scientific datasets and to which selected AI development projects will be given access. The concept is to build what amounts to a national brain to help accelerate U.S. AI development and enable America to stay ahead of China in the global AI arms race.

So, every dataset that is currently siloed within DOE, NASA, NSF, the Census Bureau, NIH, USDA, FDA, etc. will be melded into a single dataset to try to produce a sort of quantum leap in AI development. Put simply, most AI tools currently exist in a phase of their development in which they function as little more than accelerated, advanced search tools – basically, they’re in the fourth grade of their education on the way to earning a doctorate. This is an effort to induce a quantum leap among those selected tools, enabling them to figuratively skip eight grades and become college freshmen.

Here’s how the order signed Monday by President Trump puts it: “The Genesis Mission will dramatically accelerate scientific discovery, strengthen national security, secure energy dominance, enhance workforce productivity, and multiply the return on taxpayer investment into research and development, thereby furthering America’s technological dominance and global strategic leadership.”

It’s an ambitious goal that attempts to exploit some of the same central planning techniques China is able to use to its own advantage.

But here’s the thing: every element envisioned in the Genesis Mission will require more electricity. Much more, in fact. It’s a brave new world that will place a huge amount of added pressure on power generation companies and grid managers like ERCOT. Americans must hope and pray they’re up to the task. Their track records in this century do not inspire confidence.

David Blackmon is an energy writer and consultant based in Texas. He spent 40 years in the oil and gas business, where he specialized in public policy and communications.


Google denies scanning users’ email and attachments with its AI software

From LifeSiteNews

Google claims that multiple media reports are misleading and that nothing has changed with its service.

Tech giant Google is claiming that reports released earlier this week by multiple major media outlets are false and that it is not using emails or their attachments for its new Gemini AI software.

Fox News, Breitbart, and other outlets published stories this week instructing readers on how to “stop Google AI from scanning your Gmail.”

“Google shared a new update on Nov. 5, confirming that Gemini Deep Research can now use context from your Gmail, Drive and Chat,” Fox reported. “This allows the AI to pull information from your messages, attachments and stored files to support your research.”

Breitbart likewise said that “Google has quietly started accessing Gmail users’ private emails and attachments to train its AI models, requiring manual opt-out to avoid participation.”

Breitbart pointed to a press release issued by Malwarebytes that said the company made the change without users knowing.

After the backlash, Google issued a response.

“These reports are misleading – we have not changed anyone’s settings. Gmail Smart Features have existed for many years, and we do not use your Gmail content for training our Gemini AI model. Lastly, we are always transparent and clear if we make changes to our terms of service and policies,” a company spokesman told ZDNET reporter Lance Whitney.

Malwarebytes has since updated its blog post to now say they “contributed to a perfect storm of misunderstanding” in their initial reporting, adding that their claim “doesn’t appear to be” true.

But the blog has also admitted that Google “does scan email content to power its own ‘smart features,’ such as spam filtering, categorization, and writing suggestions. But this is part of how Gmail normally works and isn’t the same as training Google’s generative AI models.”

Google’s explanation will likely not satisfy users who have long been concerned with Big Tech’s surveillance capabilities and its ongoing relationship with intelligence agencies.

“I think the most alarming thing that we saw was the regular organized stream of communication between the FBI, the Department of Homeland Security, and the largest tech companies in the country,” journalist Matt Taibbi told the U.S. Congress in December 2023 during a hearing focused on how Twitter was working hand in glove with those agencies to censor users and feed the government information.

If you use Google and would like to turn off your “smart features,” the Malwarebytes blog offers an illustrated, step-by-step guide. Otherwise, you can follow these five steps courtesy of Unilad Tech.

- Open Gmail on Desktop and press the cog icon in the top right to open the settings

- Select the ‘Smart Features’ setting in the ‘General’ section

- Turn off the ‘Turn on smart features in Gmail, Chat, and Meet’ option

- Find the Google Workspace smart features section and choose to manage the smart feature settings

- Switch off ‘Smart features in Google Workspace’ and ‘Smart features in other Google products’

On November 11, a class action lawsuit was filed against Google in the U.S. District Court for the Northern District of California. The case alleges that Google violated the state’s Invasion of Privacy Act by discreetly activating Gemini AI to scan Gmail, Google Chat, and Google Meet messages in October 2025 without notifying users or seeking their consent.
