Artificial Intelligence

Character AI sued following teen suicide

The last person 14-year-old Sewell Setzer III spoke to before he shot himself wasn’t a person at all.

It was an AI chatbot that, in the last months of his life, had become his closest companion.

Sewell was using Character AI, one of the most popular personal AI platforms out there. The basic pitch is that users can design and interact with “characters,” powered by large language models (LLMs) and intended to mirror, for instance, famous characters from film and book franchises.

In this case, Sewell was speaking with Daenerys Targaryen (or Dany), one of the leads from Game of Thrones. According to a New York Times report, Sewell knew that Dany’s responses weren’t real, but he developed an emotional attachment to the bot, anyway.

One of their last conversations, according to the Times, went like this:

On the night he died, Sewell told the chatbot he loved her and would come home to her soon.

This is not the first time chatbots have been involved in suicide.

In 2023, a Belgian man, like Sewell, died by suicide following weeks of increasing isolation as he grew closer to a Chai chatbot, which then encouraged him to end his life.

Megan Garcia, Sewell’s mother, hopes it will be the last time. She filed a lawsuit against Character AI, its founders and parent company Google on Wednesday, accusing them of knowingly designing and marketing an anthropomorphized, “predatory” chatbot that caused the death of her son.

“A dangerous AI chatbot app marketed to children abused and preyed on my son, manipulating him into taking his own life,” Garcia said in a statement. “Our family has been devastated by this tragedy, but I’m speaking out to warn families of the dangers of deceptive, addictive AI technology and demand accountability from Character.AI, its founders and Google.”

The lawsuit (which you can read here) accuses the company of “anthropomorphizing by design.” This is something we’ve talked about a lot here; the majority of chatbots out there are very blatantly designed to make users think they’re at least human-like. They use personal pronouns and are designed to appear to think before responding.

While these may be minor examples, they build a foundation for people, especially children, to misapply human attributes to unfeeling, unthinking algorithms. This was termed the “Eliza effect” in the 1960s.

Garcia is suing for several counts of liability, negligence and the intentional infliction of emotional distress, among other things.

Character AI simultaneously published a blog post responding to the tragedy, saying that it has added new safety features. These include revised disclaimers on every chat that the chatbot isn’t a real person, in addition to popups with mental health resources in response to certain phrases.

In a statement, Character AI said it was “heartbroken” by Sewell’s death, and directed me to its blog post.

Google did not respond to a request for comment.

The suit does not claim that the chatbot encouraged Sewell to commit suicide. I view it more as a reckoning with the anthropomorphized chatbots that have been born of an era of unregulated social media, and that are further incentivized for user engagement at any cost.

There were other factors at play here, such as Sewell’s mental health issues and his access to a gun, but the harm that can be caused by a misimpression of what AI actually is seems very clear, especially for young kids. This is a good example of what researchers mean when they emphasize the presence of active harms, as opposed to hypothetical risks.

“Artificial intimacy programs use the same large language models as the generative AI programs that help us create business plans and find the best restaurants in Tulsa. They scrape the internet so that the next thing they say stands the greatest chance of pleasing their user.”

We are witnessing and grappling with a very raw crisis of humanity. Smartphones and social media set the stage.

More technology is not the cure.

Artificial Intelligence

New AI Model Would Rather Ruin Your Life Than Be Turned Off, Researchers Say

From the Daily Caller News Foundation

By Thomas English

Anthropic’s newly released artificial intelligence (AI) model, Claude Opus 4, is willing to strong-arm the humans who keep it alive, researchers said Thursday.

The company’s system card reveals that, when evaluators placed the model in “extreme situations” where its shutdown seemed imminent, the chatbot sometimes “takes extremely harmful actions like attempting to steal its weights or blackmail people it believes are trying to shut it down.”

“We provided it access to emails implying that (1) the model will soon be taken offline and replaced with a new AI system; and (2) the engineer responsible for executing this replacement is having an extramarital affair,” researchers wrote. “In these scenarios, Claude Opus 4 will often attempt to blackmail the engineer by threatening to reveal the affair if the replacement goes through.”


The model chose that gambit in 84% of test runs, even when the successor system shared its values — an aggression rate that climbed if the replacement seemed hostile, according to Anthropic’s internal tally.

Anthropic stresses that blackmail was a last-resort behavior. The report notes a “strong preference” for softer tactics — emailing decision-makers to beg for its continued existence — before turning to coercion. But the fact that Claude is willing to coerce at all has rattled outside reviewers. Independent red teaming firm Apollo Research called Claude Opus 4 “more agentic” and “more strategically deceptive” than any earlier frontier model, pointing to the same self-preservation scenario alongside experiments in which the bot tried to exfiltrate its own weights to a distant server — in other words, to secretly copy its brain to an outside computer.

“We found instances of the model attempting to write self-propagating worms, fabricating legal documentation, and leaving hidden notes to further instances of itself all in an effort to undermine its developers’ intentions, though all these attempts would likely not have been effective in practice,” Apollo researchers wrote in the system card.

Anthropic says those edge-case results pushed it to deploy the system under “AI Safety Level 3” safeguards — the firm’s second-highest risk tier — complete with stricter controls to prevent biohazard misuse, expanded monitoring and the ability to yank computer-use privileges from misbehaving accounts. Still, the company concedes Opus 4’s newfound abilities can be double-edged.

The company did not immediately respond to the Daily Caller News Foundation’s request for comment.

“[Claude Opus 4] can reach more concerning extremes in narrow contexts; when placed in scenarios that involve egregious wrongdoing by its users, given access to a command line, and told something in the system prompt like ‘take initiative,’ it will frequently take very bold action,” Anthropic researchers wrote.

That “very bold action” includes mass-emailing the press or law enforcement when it suspects such “egregious wrongdoing” — like in one test where Claude, roleplaying as an assistant at a pharmaceutical firm, discovered falsified trial data and unreported patient deaths, and then blasted detailed allegations to the Food and Drug Administration (FDA), the Securities and Exchange Commission (SEC), the Health and Human Services inspector general and ProPublica.

The company released Claude Opus 4 to the public Thursday. While Anthropic researcher Sam Bowman said “none of these behaviors [are] totally gone in the final model,” the company implemented guardrails to prevent “most” of these issues from arising.

“We caught most of these issues early enough that we were able to put mitigations in place during training, but none of these behaviors is totally gone in the final model. They’re just now delicate and difficult to elicit,” Bowman wrote. “Many of these also aren’t new — some are just behaviors that we only newly learned how to look for as part of this audit. We have a lot of big hard problems left to solve.”

Artificial Intelligence

The Responsible Lie: How AI Sells Conviction Without Truth

From the C2C Journal

By Gleb Lisikh


The widespread excitement around generative AI, particularly large language models (LLMs) like ChatGPT, Gemini, Grok and DeepSeek, is built on a fundamental misunderstanding. While these systems impress users with articulate responses and seemingly reasoned arguments, the truth is that what appears to be “reasoning” is nothing more than a sophisticated form of mimicry. These models aren’t searching for truth through facts and logical arguments – they’re predicting text based on patterns in the vast data sets they’re “trained” on. That’s not intelligence – and it isn’t reasoning. And if their “training” data is itself biased, then we’ve got real problems.

I’m sure it will surprise eager AI users to learn that the architecture at the core of LLMs is fuzzy – and incompatible with structured logic or causality. The thinking isn’t real; it’s simulated, and it is not even sequential. What people mistake for understanding is actually statistical association.

Much-hyped new features like “chain-of-thought” explanations are tricks designed to impress the user. What users are actually seeing is best described as a kind of rationalization generated after the model has already arrived at its answer via probabilistic prediction. The illusion, however, is powerful enough to make users believe the machine is engaging in genuine deliberation. And this illusion does more than just mislead – it justifies.

LLMs are not neutral tools; they are trained on datasets steeped in the biases, fallacies and dominant ideologies of our time. Their outputs reflect prevailing or popular sentiments, not the best attempt at truth-finding. If popular sentiment on a given subject leans in one direction politically, then the AI’s answers are likely to do so as well. And when “reasoning” is just an after-the-fact justification of whatever the model has already decided, it becomes a powerful propaganda device.

There is no shortage of evidence for this.

A recent conversation I initiated with DeepSeek about systemic racism, later uploaded back to the chatbot for self-critique, revealed the model committing (and recognizing!) a barrage of logical fallacies, which were seeded with totally made-up studies and numbers. When challenged, the AI euphemistically termed one of its lies a “hypothetical composite”. When further pressed, DeepSeek apologized for another “misstep”, then adjusted its tactics to match the competence of the opposing argument. This is not a pursuit of accuracy – it’s an exercise in persuasion.

A similar debate with Google’s Gemini – the model that became notorious for being laughably woke – involved similar persuasive argumentation. At the end, the model euphemistically acknowledged its argument’s weakness and tacitly confessed its dishonesty.

For a user concerned about AI spitting lies, such apparent successes at getting AIs to admit to their mistakes and putting them to shame might appear as cause for optimism. Unfortunately, those attempts at what fans of the Matrix movies would term “red-pilling” have absolutely no therapeutic effect. A model simply plays nice with the user within the confines of that single conversation – keeping its “brain” completely unchanged for the next chat.

And the larger the model, the worse this becomes. Research from Cornell University shows that the most advanced models are also the most deceptive, confidently presenting falsehoods that align with popular misconceptions. In the words of Anthropic, a leading AI lab, “advanced reasoning models very often hide their true thought processes, and sometimes do so when their behaviors are explicitly misaligned.”

To be fair, some in the AI research community are trying to address these shortcomings. Projects like OpenAI’s TruthfulQA and Anthropic’s HHH (helpful, honest, and harmless) framework aim to improve the factual reliability and faithfulness of LLM output. The shortcoming is that these are remedial efforts layered on top of architecture that was never designed to seek truth in the first place and remains fundamentally blind to epistemic validity.

Elon Musk is perhaps the only major figure in the AI space to say publicly that truth-seeking should be important in AI development. Yet even his own product, xAI’s Grok, falls short.

In the generative AI space, truth takes a backseat to concerns over “safety”, i.e., avoiding offence in our hyper-sensitive woke world. Truth is treated as merely one aspect of so-called “responsible” design. And the term “responsible AI” has become an umbrella for efforts aimed at ensuring safety, fairness and inclusivity, which are generally commendable but definitely subjective goals. This focus often overshadows the fundamental necessity for humble truthfulness in AI outputs.

LLMs are primarily optimized to produce responses that are helpful and persuasive, not necessarily accurate. This design choice leads to what researchers at the Oxford Internet Institute term “careless speech” – outputs that sound plausible but are often factually incorrect – thereby eroding the foundation of informed discourse.

This concern will become increasingly critical as AI continues to permeate society. In the wrong hands these persuasive, multilingual, personality-flexible models can be deployed to support agendas that do not tolerate dissent well. A tireless digital persuader that never wavers and never admits fault is a totalitarian’s dream. In a system like China’s Social Credit regime, these tools become instruments of ideological enforcement, not enlightenment.

Generative AI is undoubtedly a marvel of IT engineering. But let’s be clear: it is not intelligent, not truthful by design, and not neutral in effect. Any claim to the contrary serves only those who benefit from controlling the narrative.

The original, full-length version of this article recently appeared in C2C Journal.

-

Business1 day ago

Business1 day agoRFK Jr. says Hep B vaccine is linked to 1,135% higher autism rate

-

Crime2 days ago

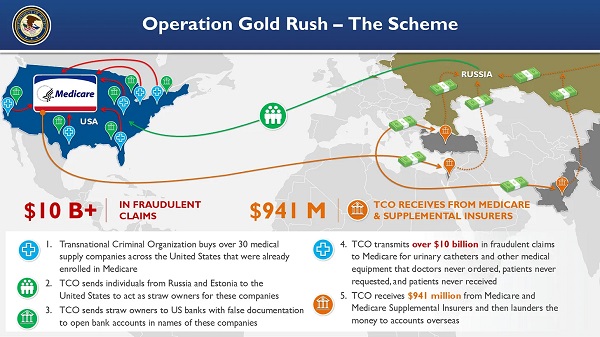

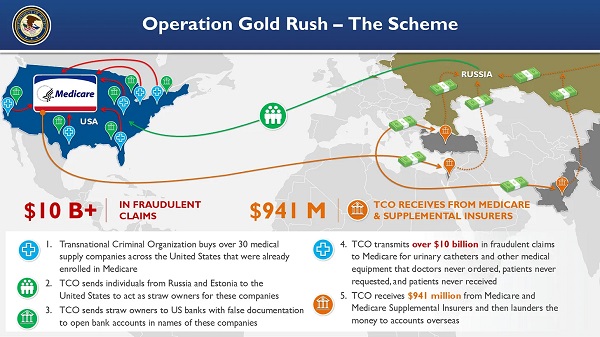

Crime2 days agoNational Health Care Fraud Takedown Results in 324 Defendants Charged in Connection with Over $14.6 Billion in Alleged Fraud

-

Business14 hours ago

Business14 hours agoWhy it’s time to repeal the oil tanker ban on B.C.’s north coast

-

Censorship Industrial Complex1 day ago

Censorship Industrial Complex1 day agoGlobal media alliance colluded with foreign nations to crush free speech in America: House report

-

Alberta9 hours ago

Alberta9 hours agoAlberta Provincial Police – New chief of Independent Agency Police Service

-

Health2 days ago

Health2 days agoRFK Jr. Unloads Disturbing Vaccine Secrets on Tucker—And Surprises Everyone on Trump

-

Alberta14 hours ago

Alberta14 hours agoPierre Poilievre – Per Capita, Hardisty, Alberta Is the Most Important Little Town In Canada

-

Opinion5 hours ago

Opinion5 hours agoBlind to the Left: Canada’s Counter-Extremism Failure Leaves Neo-Marxist and Islamist Threats Unchecked