Artificial Intelligence

AI is another reason why Canada needs to boost the energy supply

From Resource Works

Massive amounts of energy are required to keep up with AI innovations, and Canada risks being unable to supply it

Artificial Intelligence is already one of the most important technologies of our time, and its development has been pushing innovation at a breakneck pace across huge swathes of the economy. Smart assistants now operate, albeit in a limited fashion, as secretaries for those who need help in the office, while autonomous vehicle capabilities keep improving.

It is a remarkable and world-changing time.

Just like playing a video game, turning on a light, or starting a car, AI requires energy. To say that AI’s appetite for energy is ravenous is an understatement, and Canadian governments must understand the challenge that comes with that.

Energy shortages are a growing threat to Canada’s economic security and, yes, our standard of living. Failure to keep up with demand means importing more energy at a cost, or facing energy blackouts, in which case Canada will fall behind in far more than just AI.

New AI models are seemingly rolling out every month, especially in machine learning and generative AI. OpenAI’s ChatGPT and Google’s Bard require huge levels of computing power to work. Training ChatGPT-4, an advanced language model, consumes thousands of megawatt hours of electricity, comparable to the energy usage of urban centres.

A single query made to ChatGPT requires ten times the energy of a Google search, revealing the massive needs of AI technology. AI is not just another internet search extension or downloadable app; it is an entirely new industry.

AI models are trained and run in data centers, which are central to this energy dilemma. The sheer power consumption in data centers is ballooning, and some estimates warn that the world’s data center energy demand will surge by 160 percent by 2030.

The International Energy Agency (IEA) has reported that AI and data centers already consume 1 to 2 percent of global electricity, a figure expected only to climb as more companies embrace AI-driven technology. As much as AI is driving digital innovation, it is also consuming electricity at a rate we will have to match.

Canada’s energy security is being seriously challenged by rising demand, with or without AI. Historically, Canadians have enjoyed the fruits of abundant, cheap energy generated by hydroelectricity in BC and Quebec, or nuclear power in Ontario. Times, and weather, have unfortunately changed.

A large and growing population, electrifying economies, and the weakening of Canada’s legacy energy sources are pushing the country’s power supply to its limits.

The current federal government wants Canada to achieve net-zero emissions by 2050, which means that electricity generation will have to double in the next 25 years. Canada is already dealing with electricity shortages, such as in British Columbia, where demand for hydroelectricity is expected to rise 15 percent over the next six years. Manitoba is projecting a shortfall by 2029, while Ontario races to put up new nuclear power plants to avert an energy crisis, also by 2029.

AI can help Canadians craft solutions to their incoming energy problems as a valuable research aid that can help with modeling and processing data. However, that will mean more energy consumption, adding to the rogue wave of demand that AI innovation has created.

As evidenced by the constant developments in AI, it is obvious that the technology is not going away, and neither are Canada’s energy shortfalls.

If AI is going to contribute to the surge in energy demand, then it only makes sense that it becomes a vital tool in the search for solutions, and we need those solutions now.

Artificial Intelligence

Apple faces proposed class action over its lag in Apple Intelligence

News release from The Deep View

Apple, already moving slowly out of the gate on generative AI, has been dealing with a number of roadblocks and mounting delays in its effort to bring a truly AI-enabled Siri to market. The problem, or one of the problems, is that Apple used these same AI features to heavily promote its latest iPhone, which, as it says on its website, was “built for Apple Intelligence.”

Now, the tech giant has been accused of false advertising in a proposed class action lawsuit that argues that Apple’s “pervasive” marketing campaign was “built on a lie.”

The details: Apple has, if reluctantly, acknowledged delays on a more advanced Siri, pulling one of the ads that demonstrated the product and adding a disclaimer to its iPhone 16 product page that the feature is “in development and will be available with a future software update.”


Apple did not respond to a request for comment.

The lawsuit was first reported by Axios.

This all comes amid an executive shuffle that just took place at Apple HQ, which put Vision Pro creator Mike Rockwell in charge of the Siri overhaul, according to Bloomberg.

Still, shares of Apple rallied to close the day up around 2%, though the stock is still down 12% for the year.

Artificial Intelligence

Apple bets big on Trump economy with historic $500 billion U.S. investment

Diving Deeper:

Apple’s unprecedented $500 billion investment marks what the company calls “an extraordinary new chapter in the history of American innovation.” The tech giant plans to establish an advanced AI server manufacturing facility near Houston and significantly expand research and development across several key states, including Michigan, Texas, California, and Arizona.

Apple CEO Tim Cook highlighted the company’s confidence in the U.S. economy, stating, “We’re proud to build on our long-standing U.S. investments with this $500 billion commitment to our country’s future.” He noted that the expansion of Apple’s Advanced Manufacturing Fund and investments in cutting-edge technology will further solidify the company’s role in American innovation.

President Trump was quick to highlight Apple’s announcement as a testament to his administration’s economic policies. In a Truth Social post Monday morning, he wrote:

“APPLE HAS JUST ANNOUNCED A RECORD 500 BILLION DOLLAR INVESTMENT IN THE UNITED STATES OF AMERICA. THE REASON, FAITH IN WHAT WE ARE DOING, WITHOUT WHICH, THEY WOULDN’T BE INVESTING TEN CENTS. THANK YOU TIM COOK AND APPLE!!!”

Trump previously hinted at the investment during a White House meeting Friday, revealing that Cook had committed to investing “hundreds of billions of dollars” in the U.S. economy. “That’s what he told me. Now he has to do it,” Trump quipped.

Apple’s expansion will include 20,000 new jobs, with a strong focus on artificial intelligence, silicon engineering, and machine learning. The company also aims to support workforce development through training programs and partnerships with educational institutions.

With Apple’s announcement, the U.S. economy stands to benefit from a major influx of investment into high-tech manufacturing and innovation—further underscoring the tech industry’s continued growth under Trump’s economic agenda.

-

2025 Federal Election2 days ago

2025 Federal Election2 days agoBREAKING: THE FEDERAL BRIEF THAT SHOULD SINK CARNEY

-

2025 Federal Election2 days ago

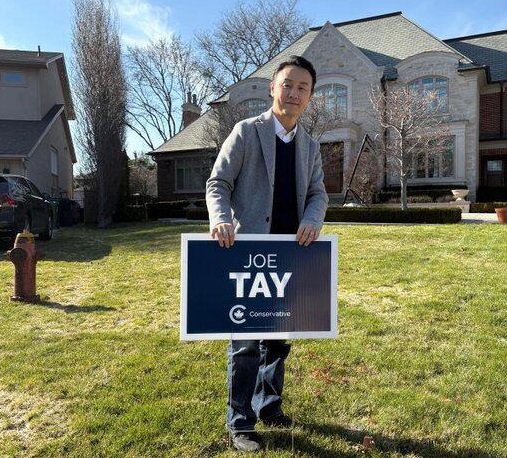

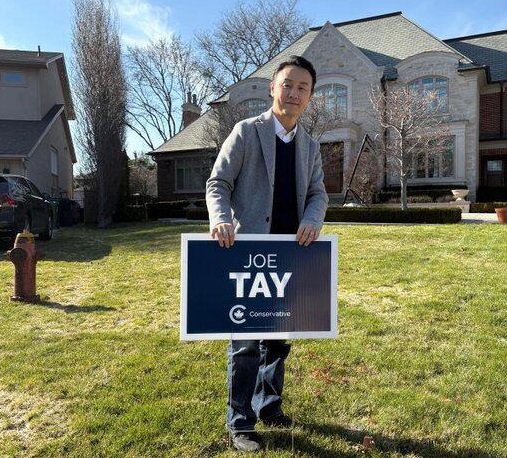

2025 Federal Election2 days agoCHINESE ELECTION THREAT WARNING: Conservative Candidate Joe Tay Paused Public Campaign

-

2025 Federal Election1 day ago

2025 Federal Election1 day agoMark Carney Wants You to Forget He Clearly Opposes the Development and Export of Canada’s Natural Resources

-

International1 day ago

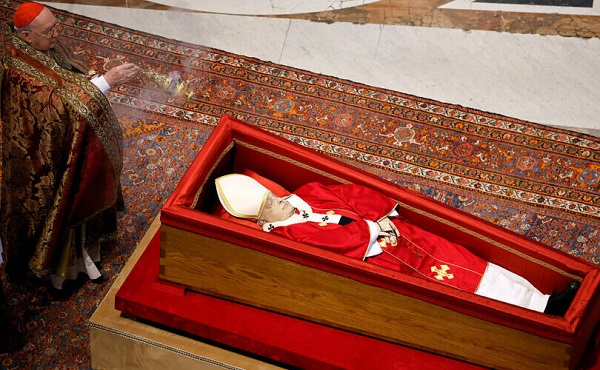

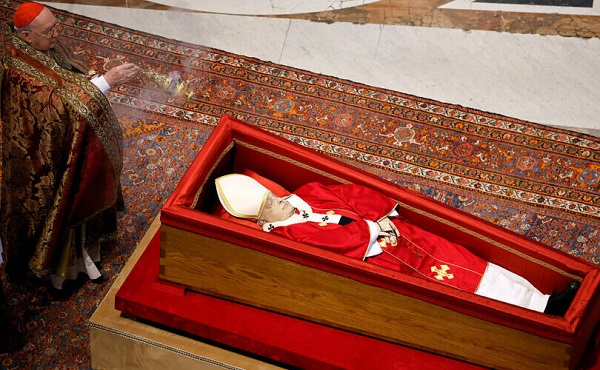

International1 day agoPope Francis’ body on display at the Vatican until Friday

-

Business2 days ago

Business2 days agoHudson’s Bay Bid Raises Red Flags Over Foreign Influence

-

2025 Federal Election1 day ago

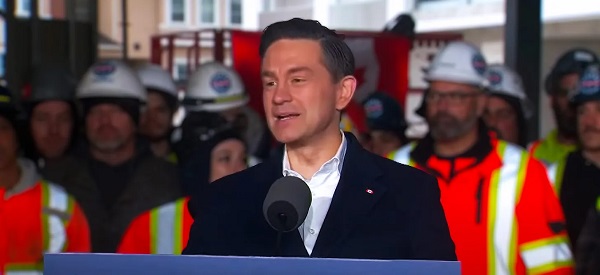

2025 Federal Election1 day agoCanada’s pipeline builders ready to get to work

-

2025 Federal Election16 hours ago

2025 Federal Election16 hours agoFormer WEF insider accuses Mark Carney of using fear tactics to usher globalism into Canada

-

International2 days ago

International2 days agoPope Francis’ funeral to take place Saturday