Now, 45 years later, comes the announcement of a deal between tech giant Microsoft Corporation and Constellation Energy, owner of the infamous Three Mile Island facility, to restart the mothballed nuclear plant’s sister reactor, Unit 1. It will be the first such restart in the U.S.

Nuclear revival? Forty-five years after the infamous partial reactor core melt-down at Three Mile Island (pictured at top left and centre) and release of the sensationalistic anti-nuclear movie The China Syndrome (starring Jane Fonda, pictured at bottom left), the plant’s sister reactor is set for a US$1.6 billion restart to power data centres supporting artificial intelligence (AI). Shown at top right, Nuclear Regulatory Commission staff during Three Mile Island crisis; bottom right, U.S. President Jimmy Carter’s motorcade leaves Three Mile Island nuclear power station. (Sources of photos: (top left) zoso8203, licensed under CC BY 2.0; (top centre) AP Photo/Carolyn Kaster; (top right) NRCgov, licensed under CC BY-NC-ND 2.0; (bottom left) Everett Collection/The Canadian Press; (bottom right) NRCgov, licensed under CC BY 2.0)

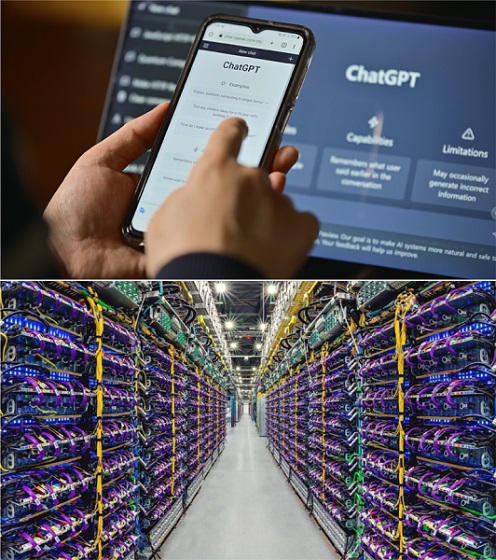

After all these years, why now? The answer is electricity demand for artificial intelligence (AI). Like many things in the tech realm, AI is a sneakily prodigious consumer of electricity, and AI’s use is exploding. The Microsoft/Constellation project is one of several such deals recently unveiled by tech giants.

A Goldman Sachs report from May of this year illuminates the issue, observing that, “On average, a ChatGPT query needs 10 times as much electricity to process as a Google search.” ChatGPT is a popular AI tool for information research and content creation (college kids particularly love it); a related and even more power-hungry tool spits out sophisticated digital imagery. And ChatGPT is only one of the burgeoning AI applications, which include everything from order processing and customer fulfillment to global shipping, generating sales leads, and helping operate factories and ports. Consequently, says Goldman Sachs, “Our researchers estimate data center power demand will grow 160% by 2030” – representing a remarkable one-third of all growth in U.S. electricity demand. “This increased demand will help drive the kind of electricity growth that hasn’t been seen in a generation,” says the report, which pegs that growth at a robust 2.4 percent per year over this period.

Power-hungry tech: The rise of AI tools like ChatGPT is forecast to increase power demand from data centres by 160 percent over the next six years, part of a robust expected increase in overall electricity consumption. Shown at bottom, Google data centre for the company’s Gemini AI platform. (Sources of photos: (top) Ju Jae-young/Shutterstock; (bottom) Google)

That’s a lot of juice. So where will all this additional power come from? In the U.S., 60 percent of electricity comes from natural gas and coal. Nuclear energy supplies 19 percent, hydroelectric facilities 6 percent, while wind and solar provide the remaining 14 percent. But wind and solar are intermittent, difficult to scale quickly, geographically limited – and, above all, cannot be counted on for the large-scale, uninterrupted, secure “base load” that AI requires.

The small modular reactor – a digital rendering of which is shown here – is said to offer great potential for adding nuclear power in manageable increments; the technology remains in testing, however, and is unlikely to hit the ground in Western Canada before 2034. (Source of image: OPG)

And while there is something of a nuclear revival happening in the U.S. and around the world, it will be four years before Three Mile Island comes back on-stream (at an anticipated cost of US$1.6 billion). That it takes this long even to restart an existing facility underscores the long lead times afflicting the design, construction and commissioning of any technically complex, large-scale and politically controversial infrastructure. There’s a lot of talk about shortening that cycle by focusing on a new generation of “small modular reactors” (SMRs), which generate about one-quarter the power of the regular kind. But SMRs remain largely untested and, here too, lead times are long. Alberta and Saskatchewan, for example, have been talking with other provinces for the last four years about the concept, but haven’t even begun writing the governing regulations, let alone holding public hearings. The most optimistic scenario has the first SMR coming online in 2034.

Realistically, then, most of the growth in power demand for AI will have to be met by fossil fuels, however distasteful this will be to America’s tech moguls, who want to be seen as hip and earth-friendly even if not all of them are actually left-leaning. (A laughable detail of the recent Constellation/Microsoft deal is that Three Mile Island is being renamed the “Crane Clean Energy Center”, as if it’s some kind of Google-style campus.)

Those tech moguls will have to come to terms with natural gas. Natural gas is by far the lowest-emission fossil fuel. It is readily transportable by pipeline around North America. Large-scale gas-fired generating facilities can be built quickly, at reasonable cost and at low risk using mature technology, and can be located almost anywhere. And, fortunately for Americans, natural gas is in robust supply, with production setting new records nearly every year, and is currently cheaper than dirt. Indeed, the Goldman report itself forecasts (too conservatively, in my view) that the growth in electricity demand will in turn trigger “3.3 billion cubic feet per day of new natural gas demand by 2030, which will require new pipeline capacity to be built.”

In Canada, 60 percent of our electricity comes from hydro power, but very few viable new dam sites are left (Quebec recently commissioned a new dam after years of delay, and does have a few additional candidate sites, but these are the rare exceptions). Ontario’s nuclear plants supply 16 percent. Expansion of this is under consideration but, as noted, any new capacity is many years away. Coal and coke supply 8 percent (and are being further scaled back), natural gas 8 percent, and solar and wind 6 percent. So Canada’s growing electricity demand, much of it driven by AI and other tech requirements, will also need to be fuelled by natural gas. Fortunately, Canada too has enormous untapped natural gas reserves, and is also setting new production records.

Plentiful, flexible, transportable, cheap: The lowest-emission fossil fuel, natural gas offers the best way to meet growing global energy demand, representing an enormous export opportunity for Canada and the U.S. Shown at top left, Freeport LNG Liquefaction facility, Freeport, Texas; top right, LNG Canada project under construction in Kitimat, B.C. (Sources: (top left photo) Freeport LNG; (top right photo) The Canadian Press/Darryl Dyck; (graph) Canadian Energy Regulator)

In contrast to the United States and Canada, Europe is struggling just to meet existing electricity demand after natural gas imports from Russia dropped from 5.5 trillion cubic feet in 2021 to 2.2 trillion cubic feet last year. Europe’s only option is importing liquefied natural gas (LNG). Germany, previously the largest importer of Russian gas – and which in the face of the resulting energy shortage chose to shut down the last of its nuclear plants – is constructing LNG import/regasification terminals on an urgent basis. Regrettably, the situation could get even worse for Europe; China is in talks with Russia that could lead to complete stoppage of remaining gas flows, further escalating Europe’s need for LNG.

That makes meeting the electricity demands of the EU’s smaller but also growing AI sector even more challenging. Moreover, Europe’s power grid is the oldest in the world at 50 years, so it needs both modernization and expansion. The above-quoted Goldman Sachs report states that, “Europe needs $1 trillion [in new investment] to prepare its power grid for AI.” Goldman’s researchers estimate that the continent’s power demand could grow by at least 40 percent in the next ten years, requiring investment of US$861 billion in electricity generation on top of the even higher amount to replace those old transmission systems. The situation is complex and challenging, but one thing is clear: the electricity Europe requires for AI can be fuelled in large part only by natural gas imported from friendly countries.

The AI frenzy may still seem incomprehensible to most Canadians, so it’s important to understand how its applications are spreading through more and more of the economy. Toronto-based Thomson Reuters is a well-known company that provides data and information to professionals across three main industries: legal, tax & accounting, and news & media. A recent Globe and Mail article about Thomson Reuters’ journey from reticence to embrace of the AI world provides helpful perspective. After spending a year of assessment, management concluded that AI was key to the company’s future. Thomson Reuters pledged to spend US$100 million annually to develop its AI capacity. Knowing that this is the cost for just one medium-sized Canadian company puts into perspective the potential scale of AI’s electricity-hungry global growth.

More juice needed: As many more companies – like Toronto-based information conglomerate Thomson Reuters – come to understand the need to embrace AI technology, the global appetite for electricity will continue to grow, demand that will only increase with the further advancement of cryptocurrencies and electric vehicles. (Sources of photos: (left) The Canadian Press/Lars Hagberg; (right) Shutterstock)

Almost forgotten on the list of electricity devourers are cryptocurrencies. In 2020-21 Bitcoin “mining” (the data centres that compete to solve cryptographic puzzles as quickly as possible in order to add new blocks to the blockchain) consumed more electricity than the 230 million people of Pakistan. Meeting the tech sector’s voracious and – if the growth forecasts are accurate – essentially insatiable demand for electricity will be challenging enough, but there’s another major source of electricity demand growth: electric vehicles (EVs). An International Energy Agency report estimates that EV power needs in the U.S. and Europe will rise from less than 1 percent of electricity demand today to 14 percent in 2030 if electric vehicle mandates are to be met. This C2C article examines the specific implications for Canada.

Who could have imagined that these celebrated new technologies – billed as clean, green and “sustainable” – would end up being the biggest drivers of fossil fuel growth! With our incredible endowment of accessible natural resources, our nation should seize this enormous natural gas export opportunity by getting rid of the time-consuming bureaucratic processes and other roadblocks that have for so long discouraged the building and operation of new LNG export terminals.

Gwyn Morgan is a retired business leader who was a director of five global corporations.